| 171. Mathur, S.; van der Vleuten, N.; Yager, K.G.; Tsai, E. "VISION: A Modular AI Assistant for Natural Human-Instrument Interaction at Scientific User Facilities" Machine Learning Science and Technology 2025, TBD. [ summary – doi ] [ press: BNL ] |  |

| 170. Yager, K.G. "Towards a Science Exocortex" Digital Discovery 2024, 3, 1933–1957. [ summary – doi ] [ press: BNL - CFN ] |  |

| 169. Doerk, G.S.; Yager, K.G. "Diversifying self-assembled phases in block copolymer thin films via blending" Physical Review Materials 2023, 7, 120301. [ summary – doi ] |  |

| 168. Yager, K.G. "Domain-specific chatbots for science using embeddings" Digital Discovery 2023, 2, 1850–1861. [ summary – doi ] [ press: BNL - phys.org - unite.ai - the science times - futuritech ] |  |

| 167. Barbour, A.; Cai, Y.Q.; Fluerasu, A.; Freychet, G.; Fukuto, M.; Gang, O.; Gann, E.; Laasch, R.; Li, R.; Ocko, B.M.; Tsai, E.H.R.; Wasik, P.; Wiegart, L.; Yager, K.G.; Yang, L.; Zhang, H.; Zhang, Y. "X-Ray Scattering for Soft Matter Research at NSLS-II" Synchrotron Radiation News 2023, 36, 24–30. [ summary – doi ] |  |

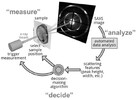

| 166. Yager, K.G.; Majewski, P.W.; Noack, M.M.; Fukuto, M. "Autonomous X-ray Scattering" Nanotechnology 2023, 34, 322001. [ summary – doi ] |  |

| 165. Nowak, S.R.; Tiwale, N.; Doerk, G.S.; Nam, C.-Y.; Black, C.T.; Yager, K.G. "Responsive Blends of Block Copolymers Stabilize the Hexagonally Perforated Lamellae Morphology" Soft Matter 2023, 19, 2594–2604. [ summary – doi ] |  |

| 164. Bae, S.; Noack, M.M.; Yager, K.G. "Surface enrichment dictates block copolymer orientation" Nanoscale 2023, 15, 6901–6912. [ summary – doi ] |  |

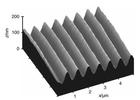

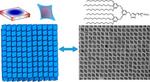

| 163. Doerk, G.S.; Stein, A.; Bae, S.; Noack, M.M.; Fukuto, M.; Yager, K.G. "Autonomous discovery of emergent morphologies in directed self-assembly of block copolymer blends" Science Advances 2023, 9, eadd3687. [ summary – doi ] [ press: BNL - Newswise - ScienceDaily - Phys.org ] |  |

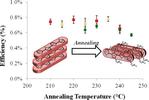

| 162. Yin, Y.; Zhou, Y.; Fu, S.; Zuo, X.; Lin, Y.-C.; Wang, L.; Xue, Y.; Zhang, Y.; Tsai, E.; Hwang, S.; Kisslinger, K.; Li, M.; Cotlet, M.; Li, T.-D.; Yager, K.G.; Nam, C.-Y.; Rafailovich, M.H. "Enhancing Crystallization in Hybrid Perovskite Solar Cells Using Thermally Conductive Two-Dimensional Boron Nitride Nanosheet Additive" Small 2023, 19, 2207092. [ summary – doi ] |  |

| 161. Yager, K.G. "Spontaneous assembly of hierarchical phases" Nature Nanotechnology 2023, 18, 223–224. [ summary – doi ] |  |

| 160. Russell, S.T.; Bae, S.; Subramanian, A.; Tiwale, N.; Doerk, G.S.; Nam, C.-Y.; Fukuto, M.; Yager, K.G. "Priming self-assembly pathways by stacking block copolymers" Nature Communications 2022, 13, 6947. [ summary – doi ] [ press: BNL ] |  |

| 159. Zhao, C.; Chung, C.-C.; Jiang, S.; Noack, M.M.; Chen, J.-H.; Manandhar, K.; Lynch, J.; Zhong, H.; Zhu, W.; Maffettone, P.; Olds, D.; Fukuto, M.; Takeuchi, I.; Ghose, S.; Caswell, T.; Yager, K.G.; Chen-Wiegart, Y.-C.K. "Machine-learning for designing nanoarchitectured materials by dealloying" Communications Materials 2022, 3, 86. [ summary – doi ] |  |

| 158. Bae, S.; Yager, K.G. "Chain Redistribution Stabilizes Coexistence Phases in Block Copolymer Blends" ACS Nano 2022, 16, 17107–17115. [ summary – doi ] |  |

| 157. Huang, Z.; Cuniberto, E.; Park, S.; Kisslinger, K.; Wu, Q.; Taniguchi, T.; Watanabe, K.; Yager, K.G.; Shahrjerdi, D. "Mechanisms of Interface Cleaning in Heterostructures Made from Polymer-Contaminated Graphene" Small 2022, 18, 2201248. [ summary – doi ] [ press: BNL ] |  |

| 156. Guan, Z.; Tsai, E.H.R.; Huang, X.; Yager, K.G.; Hong, Q. "Non-Blind Deblurring for Fluorescence: A Deformable Latent Space Approach With Kernel Parameterization" Applications of Computer Vision (WACV) 2022, 1, 711–719. [ summary ] |  |

| 155. Alexander, F.J.; Ang, J.; Bilbrey, J.A.; Balewski, J.; Casey, T.; Chard, R.; Choi, J.; Choudhury, S.; Debusschere, B.; DeGennaro, A.M.; Dryden, N.; Ellis, J.A.; Foster, I.; Cardona, C.G.; Ghosh, S.; Harrington, P.; Huang, Y.; Jha, S.; Johnston, T.; Kagawa, A.; Kannan, R.; Kumar, N.; Liu, Z.; Maruyama, N.; Matsuoka, S.; McCarthy, E.; Mohd-Yusof, J.; Nugent, P.; Oyama, Y.; Proffen, T.; Pugmire, D.; Rajamanickam, S.; Ramakrishniah, V.; Schram, M.; Seal, S.K.; Sivaraman, G.; Sweeney, C.; Tan, L.; Thakur, R.; Essen, B.V.; Ward, L.; Welch, P.; Wolf, M.; Xantheas, S.S.; Yager, K.G.; Yoo, S.; Yoon, B.-J. "Co-design Center for Exascale Machine Learning Technologies (ExaLearn)" The International Journal of High Performance Computing Applications 2021, 35, 598–616. [ summary – doi ] |  |

| 154. Toth, K.; Bae, S.; Osuji, C.O.; Yager, K.G.; Doerk, G.S. "Film Thickness and Composition Effects in Symmetric Ternary Block Copolymer/Homopolymer Blend Films: Domain Spacing and Orientation" Macromolecules 2021, 54, 7970–7986. [ summary – doi ] [ press: BNL ] |  |

| 153. Noack, M.M.; Zwart, P.H.; Ushizima, D.M.; Fukuto, M.; Yager, K.G.; Elbert, K.C.; Murray, C.B.; Stein, A.; Doerk, G.S.; Tsai, E.H.R.; Li, R.; Freychet, G.; Zhernenkov, M.; Holman, H.-Y.N.; Lee, S.; Chen, L.; Rotenberg, E.; Weber, T.; Le Goc, Y.; Boehm, M.; Steffens, P.; Mutti, P.; Sethian, J.A. "Gaussian processes for autonomous data acquisition at large-scale synchrotron and neutron facilities" Nature Reviews Physics 2021, 3, 685–697. [ summary – doi ] [ press: BNL - LBNL ] |  |

| 152. Early, J.T.; Block, A.; Yager, K.G.; Lodge, T.P. "Molecular Weight Dependence of Block Copolymer Micelle Fragmentation Kinetics" Journal of the American Chemical Society 2021, 143, 7748–7758. [ summary – doi ] |  |

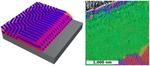

| 151. Majewski, P.W.; Michelson, A.; Cordeiro, M.A.L.; Tian, C.; Ma, C.; Kisslinger, K.; Tian, Y.; Liu, W.; Stach, E.A.; Yager, K.G.; Gang, O. "Resilient three-dimensional ordered architectures assembled from nanoparticles by DNA" Science Advances 2021, 7, eabf0617. [ summary – doi ] [ press: BNL - Columbia ] |  |

| 150. Nowak, S.R.; Lachmayr, K.K.; Yager, K.G.; Sita, L.R. "Stable Thermotropic 3D and 2D Double Gyroid Nanostructures with Sub-2-nm Feature Size from Scalable Sugar-Polyolefin Conjugates" Angewandte Chemie International Edition 2021, 60, 8710–8716. [ summary – doi ] |  |

| 149. Samant, S.; Hailu, S.; Singh, M.; Pradhan, N.; Yager, K.G.; Al-Enizi, A.M.; Raghavan, D.; Karim, A. "Alignment frustration in block copolymer films with block copolymer grafted TiO2 nanoparticles under soft-shear cold zone annealing" Polymers for Advanced Technologies 2021, 32, 2052–2060. [ summary – doi ] |  |

| 148. Huang, X.; Jamonnak, S.; Zhao, Y.; Wang, B.; Hoai, M.; Yager, K.G.; Xu, W. "Interactive Visual Study of Multiple Attributes Learning Model of X-Ray Scattering Images" IEEE Transactions on Visualization and Computer Graphics 2021, 27, 1312–1321. [ summary – doi ] |  |

| 147. Toth, K.; Osuji, C.O.; Yager, K.G.; Doerk, G.S. "High-throughput morphology mapping of self-assembling ternary polymer blends" RSC Advances 2020, 10, 42529–42541. [ summary – doi ] [ press: BNL - Newsbreak ] |  |

| 146. Noack, M.M.; Doerk, G.S.; Li, R.; Streit, J.K.; Vaia, R.A.; Yager, K.G.; Fukuto, M. "Autonomous materials discovery driven by Gaussian process regression with inhomogeneous measurement noise and anisotropic kernels" Scientific Reports 2020, 10, 17663. [ summary – doi ] [ press: BNL - DEIXIS ] |  |

| 145. Kinigstein, E.D.; Tsai, H.; Nie, W.; Blancon, J.-C.; Yager, K.G.; Appavoo, K.; Even, J.; Kanatzidis, M.G.; Mohite, A.D.; Sfeir, M.Y. "Edge States Drive Exciton Dissociation in Ruddlesden–Popper Lead Halide Perovskite Thin Films" ACS Materials Letters 2020, 2, 1360–1367. [ summary – doi ] |  |

| 144. Hill, J.; Campbell, S.; Carini, G.; Chen-Wiegart, Y.-C.K.; Chu, Y.; Fluerasu, A.; Fukuto, M.; Idir, M.; Jakoncic, J.; Jarrige, I.; Siddons, P.; Tanabe, T.; Yager, K.G. "Future trends in synchrotron science at NSLS-II" Journal of Physics: Condensed Matter 2020, 32, 374008. [ summary – doi ] |  |

| 143. Early, J.T.; Yager, K.G.; Lodge, T.P. "Direct Observation of Micelle Fragmentation via In Situ Liquid-Phase Transmission Electron Microscopy" ACS Macro Letters 2020, 9, 756–761. [ summary – doi ] |  |

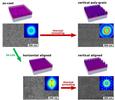

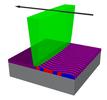

| 142. Shi, L.-Y.; Lan, J.; Lee, S.; Cheng, L.-C.; Yager, K.G.; Ross, C.A. "Vertical Lamellae Formed by Two-Step Annealing of a Rod–Coil Liquid Crystalline Block Copolymer Thin Film" ACS Nano 2020, 14, 4289–4297. [ summary – doi ] |  |

| 141. Chen-Wiegart, Y.-C.K.; Waluyo, I.; Kiss, A.; Campbell, S.; Yang, L.; Dooryhee, E.; Trelewicz, J.R.; Li, Y.; Gates, B.; Rivers, M.; Yager, K.G. "Multimodal Synchrotron Approach: Research Needs and Scientific Vision" Synchrotron Radiation News 2020, 33, 44–47. [ summary – doi ] |  |

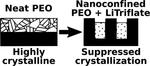

| 140. Zhang, Z.; Ding, J.; Ocko, B.M.; Lhermitte, J.; Strzalka, J.; Choi, C.-H.; Fisher, F.T.; Yager, K.G.; Black, C.T. "Nanoconfinement and Salt Synergistically Suppress Crystallization in Polyethylene Oxide" Macromolecules 2020, 53, 1494–1501. [ summary – doi ] |  |

| 139. Noack, M.M.; Doerk, G.S.; Li, R.; Fukuto, M.; Yager, K.G. "Advances in Kriging-Based Autonomous X-Ray Scattering Experiments" Scientific Reports 2020, 10, 1325. [ summary – doi ] |  |

| 138. Doerk, G.S.; Li, R.; Fukuto, M.; Yager, K.G. "Wet Brush Homopolymers as “Smart Solvents” for Rapid, Large Period Block Copolymer Thin Film Self-Assembly" Macromolecules 2020, 53, 1098–1113. [ summary – doi ] |  |

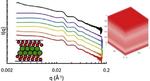

| 137. Tian, Y.; Lhermitte, J.R.; Bain, L.; Vo, T.; Xin, H.L.; Li, H.; Li, R.; Fukuto, M.; Yager, K.G.; Kahn, J.S.; Minevich, B.; Kumar, S.K.; Gang, O. "Ordered three-dimensional nanomaterials using DNA-prescribed and valence-controlled material voxels" Nature Materials 2020, 19, 789–796. [ summary – doi ] [ press: BNL - ScienceDaily - cover ] |  |

| 136. Guan, Z.; Yager, K.G.; Yu, D.; Qin, H. "Multi-Label Visual Feature Learning with Attentional Aggregation" Applications of Computer Vision (WACV) 2020, 1, 1–9. [ summary ] |  |

| 135. Toth, K.; Osuji, C.O.; Yager, K.G.; Doerk, G.S. "Electrospray Deposition Tool: Creating Compositionally Gradient Libraries of Nanomaterials" Review of Scientific Instruments 2020, 91, 013701. [ summary – doi ] |  |

| 134. Nowak, S.R.; Yager, K.G. "Photothermally directed assembly of block copolymers" Advanced Materials Interfaces 2019, 1901679. [ summary – doi ] |  |

| 133. Zhang, Z.; Ding, J.; Ocko, B.M.; Fluerasu, A.; Wiegart, L.; Zhang, Y.; Kobrak, M.; Tian, Y.; Zhang, H.; Lhermitte, J.; Choi, C.-H.; Fisher, F.T.; Yager, K.G.; Black, C.T. "Nanoscale viscosity of confined polyethylene oxide" Physical Review E 2019, 100, 062503. [ summary – doi ] |  |

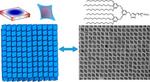

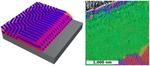

| 132. Elbert, K.C.; Vo, T.; Krook, N.M.; Zygmunt, W.; Park, J.; Yager, K.G.; Composto, R.J.; Glotzer, S.C.; Murray, C.B. "Dendrimer Ligand Directed Nanoplate Assembly" ACS Nano 2019, 13, 14241–14251. [ summary – doi ] |  |

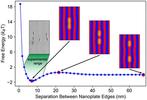

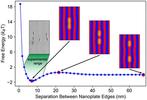

| 131. Krook, N.M.; Tabedzki, C.; Elbert, K.C.; Yager, K.G.; Murray, C.B.; Riggleman, R.A.; Composto, R.J. "Experiments and Simulations Probing Local Domain Bulge and String Assembly of Aligned Nanoplates in a Lamellar Diblock Copolymer" Macromolecules 2019, 52, 8989–8999. [ summary – doi ] |  |

| 130. Huang, X.; Jamonnak, S.; Zhao, Y.; Wang, B.; Nguyen, M.H.; Yager, K.G.; Xu, W. "Visual Understanding of Multiple Attributes Learning Model of X-Ray Scattering Images" International Conference on Computer Vision 2019, 2019, 24. [ summary ] |  |

| 129. Basutkar, M.N.; Majewski, P.W.; Doerk, G.S.; Toth, K.; Osuji, C.; Karim, A.; Yager, K.G. "Aligned Morphologies in Near-Edge Regions of Block Copolymer Thin Films" Macromolecules 2019, 52, 7224–7233. [ summary – doi ] |  |

| 128. Guan, Z.; Tsai, E.H.R.; Huang, X.; Yager, K.G.; Qin, H. "PtychoNet: Fast and High Quality Phase Retrieval for Ptychography" British Machine Vision Conference 2019, 2019, 278. [ summary – doi ] [ press: CFN ] |  |

| 127. Noack, M.M.; Yager, K.G.; Fukuto, M.; Doerk, G.S.; Li, R.; Sethian, J.A. "A Kriging-Based Approach to Autonomous Experimentation with Applications to X-Ray Scattering" Scientific Reports 2019, 9, 11809. [ summary – doi ] [ press: BNL - BNL top 10 2019 - LBL - Phys.org - Science Daily - lightsources - Newswise - MRS Podcast - DoE ] |  |

| 126. Diwan, R.; Rizvi, A.; Bhattacharyya, S.; Katramatos, D.; Yager, K.G. "Application of Analysis on the Wire to Streaming NSLS-II Beamline Data" New York Scientific Data Summit 2019, 18, 8538955. [ summary – doi ] |  |

| 125. Lu, F.; Vo, T.; Zhang, Y.; Frenkel, A.; Yager, K.G.; Kumar, S.; Gang, O. "Unusual packing of soft-shelled nanocubes" Science Advances 2019, 5, eaaw2399. [ summary – doi ] [ press: BNL - Science Daily - Materials Today ] |  |

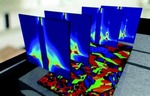

| 124. Wiegart, L.; Doerk, G.S.; Fukuto, M.; Lee, S.; Li, R.; Marom, G.; Noack, M.M.; Osuji, C.O.; Rafailovich, M.H.; Sethian, J.A.; Shmueli, Y.; Torres Arango, M.; Toth, K.; Yager, K.G.; Pindak, R. "Instrumentation for In situ/Operando X-ray Scattering Studies of Polymer Additive Manufacturing Processes" Synchrotron Radiation News 2019, 32, 20–27. [ summary – doi ] |  |

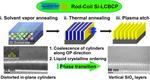

| 123. Lee, S.; Cheng, L.-C.; Yager, K.G.; Mumtaz, M.; Aissou, K.; Ross, C.A. "In Situ Study of ABC Triblock Terpolymer Self-Assembly under Solvent Vapor Annealing" Macromolecules 2019, 52, 1853–1863. [ summary – doi ] |  |

| 122. Shi, L.-Y.; Lee, S.; Cheng, L.-C.; Huang, H.; Liao, F.; Ran, R.; Yager, K.G.; Ross, C.A. "Thin Film Self-Assembly of a Silicon-Containing Rod–Coil Liquid Crystalline Block Copolymer" Macromolecules 2019, 52, 679–689. [ summary – doi ] |  |

| 121. Wang, C.; Wiener, C.G.; Fukuto, M.; Li, R.; Yager, K.G.; Weiss, R.A.; Vogt, B.D. "Strain rate dependent nanostructure of hydrogels with reversible hydrophobic associations during uniaxial extension" Soft Matter 2019, 15, 227–236. [ summary – doi ] |  |

| 120. Liu, W.; Carr, A.J.; Yager, K.G.; Routh, A.F.; Bhatia, S.R. "Sandwich layering in binary nanoparticle films and effect of size ratio on stratification behavior" Journal of Colloid and Interface Science 2019, 538, 209–217. [ summary – doi ] |  |

| 119. Liao, F.; Shi, L.-Y.; Cheng, L.-C.; Lee, S.; Ran, R.; Yager, K.G.; Ross, C.A. "Self-assembly of a silicon-containing side-chain liquid crystalline block copolymer in bulk and in thin films: kinetic pathway of a cylinder to sphere transition" Nanoscale 2019, 11, 285–293. [ summary – doi ] |  |

| 118. Doerk, G.S.; Li, R.; Fukuto, M.; Rodriguez, A.; Yager, K.G. "Thickness Dependent Ordering Kinetics in Cylindrical Block Copolymer/Homopolymer Ternary Blends" Macromolecules 2018, 51, 10259–10270. [ summary – doi ] |  |

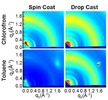

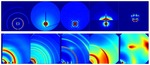

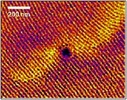

| 117. Mok, J.W.; Hu, Z.; Sun, C.; Barth, I.; Muñoz, R.; Jackson, J.; Terlier, T.; Yager, K.G.; Verduzco, R. "Network-Stabilized Bulk Heterojunction Organic Photovoltaics" Chemistry of Materials 2018, 30, 8314–8321. [ summary – doi ] [ press: Rice - BNL ] |  |

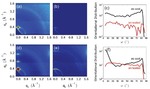

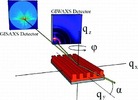

| 116. Liu, J.; Yager, K.G. "Unwarping GISAXS data" IUCrJ 2018, 5, 737–752. [ summary – doi ] [ press: IUCrJ commentary ] |  |

| 115. Zarubin, V.A.; Li, T.-D.; Humagain, S.; Ji, H.; Yager, K.G.; Greenbaum, S.G.; Vuong, L.T. "Improved Anisotropic Thermoelectric Behavior of Poly(3,4-ethylenedioxythiophene):Poly(styrenesulfonate) via Magnetophoresis" ACS Omega 2018, 3, 12554–12561. [ summary – doi ] |  |

| 114. Guan, Z.; Qin, H.; Yager, K.G.; Choo, Y.; Yu, D. "Automatic X-ray Scattering Image Annotation via Double-View Fourier-Bessel Convolutional Networks" British Machine Vision Conference 2018, 0828, 1–10. [ summary – url ] |  |

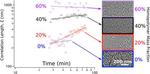

| 113. Carr, A.; Liu, W.; Yager, K.G.; Routh, A.F.; Bhatia, S.R. "Evidence of stratification in binary colloidal films from microbeam X-ray scattering: Toward optimizing the evaporative assembly processes for coatings" ACS Applied Nano Materials 2018, 1, 4211. [ summary – doi ] |  |

| 112. Kobrak, M.N.; Yager, K.G. "X-Ray scattering and physicochemical studies of trialkylamine/carboxylic acid mixtures: nanoscale structure in pseudoprotic ionic liquids and related solutions" Phys. Chem. Chem. Phys. 2018, 20, 18639–18646. [ summary – doi ] |  |

| 111. Gilmore, R.H.; Winslow, S.W.; Lee, E.M.Y.; Ashner, M.N.; Yager, K.G.; Willard, A.P.; Tisdale, W.A. "Inverse Temperature Dependence of Charge Carrier Hopping in Quantum Dot Solids" ACS Nano 2018, 12, 7741. [ summary – doi ] |  |

| 110. Sahu, P.; Yu, D.; Yager, K.G.; Dasari, M.; Qin, H. "In-Operando Tracking and Prediction of Transition in Material System using LSTM" Workshop on Autonomous Infrastructure for Science 2018, 1, 1–4. [ summary – doi ] |  |

| 109. Cheng, L.-C.; Gadelrab, K.R.; Kawamoto, K.; Yager, K.G.; Johnson, J.A.; Alexander-Katz, A.; Ross, C.A. "Templated Self-assembly of a PS-branch-PDMS Bottlebrush Copolymer" Nano Letters 2018, 18, 4360–4369. [ summary – doi ] |  |

| 108. Lee, S.; Cheng, L.-C.; Gadelrab, K.R.; Ntetsikas, K.; Moschovas, D.; Yager, K.G.; Avgeropoulos, A.; Alexander-Katz, A.; Ross, C.A. "Double-Layer Morphologies from a Silicon-Containing ABA Triblock Copolymer" ACS Nano 2018, 12, 6193–6202. [ summary – doi ] |  |

| 107. Zhang, C.; Cavicchi, K.A.; Li, R.; Yager, K.G.; Fukuto, M.; Vogt, B.D. "Thickness Limit for Alignment of Block Copolymer Films Using Solvent Vapor Annealing with Shear" Macromolecules 2018, 51, 4213–4219. [ summary – doi ] |  |

| 106. Pandolfi, R.J.; Allan, D.B.; Arenholz, E.; Barroso-Luque, L.; Campbell, S.I.; Caswell, T.A.; Blair, A.; De Carlo, F.; Fackler, S.; Fournier, A.P.; Freychet, G.; Fukuto, M.; Gürsoy, D.; Jiang, Z.; Krishnan, H.; Kumar, D.; Kline, R.J.; Li, R.; Liman, C.; Marchesini, S.; Mehta, A.; N'Diaye, A.T.; Parkinson, D.Y.; Parks, H.; Pellouchoud, L.A.; Perciano, T.; Ren, F.; Sahoo, S.; Strzalka, J.; Sunday, D.; Tassone, C.J.; Ushizima, D.; Venkatakrishnan, S.; Yager, K.G.; Zwart, P.; Sethian, J.A.; Hexemer, A. "Xi-cam: a versatile interface for data visualization and analysis" Journal of Synchrotron Radiation 2018, 25, 1261–1270. [ summary – doi ] |  |

| 105. Zhong, W.; Xu, W.; Yager, K.G.; Doerk, G.S.; Zhao, J.; Tian, Y.; Ha, S.; Xie, C.; Zhong, Y.; Mueller, K.; Kleese van Dam, K. "MultiSciView: Multivariate Scientific X-ray Image Visual Exploration with Cross-Data Space Views" Visual Informatics 2018, 2, 14–25. [ summary – doi ] |  |

| 104. Choo, Y.; Majewski, P.W.; Fukuto, M.; Osuji, C.O.; Yager, K.G. "Pathway-engineering for highly-aligned block copolymer arrays" Nanoscale 2018, 10, 416–427. [ summary – doi ] [ press: BNL - BNL top 10 2018 - CFN - Phys.org - TBR ] |  |

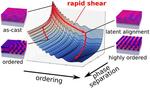

| 103. Doerk, G.S.; Yager, K.G. "Rapid Ordering in “Wet Brush” Block Copolymer/Homopolymer Ternary Blends" ACS Nano 2017, 11, 12326–12336. [ summary – doi ] [ press: BNL - CFN - Phys.org - ScienceDaily ] |  |

| 102. Basutkar, M.N.; Samant, S.; Strzalka, J.; Yager, K.G.; Singh, G.; Karim, A. "Through-thickness Vertically Ordered Lamellar Block Copolymer Thin Films on Unmodified Quartz with Cold Zone Annealing" Nano Letters 2017, 17, 7814–7823. [ summary – doi ] |  |

| 101. Meister, N.; Guan, Z.; Wang, J.; Lashley, R.; Liu, J.; Lhermitte, J.; Yager, K.G.; Qin, H.; Yu, D. "Robust and scalable deep learning for X-ray synchrotron image analysis" New York Scientific Data Summit 2017, 17, 8085045. [ summary – doi ] |  |

| 100. De France, K.J.; Yager, K.G.; Chan, K.J.W.; Corbett, B.; Cranston, E.D.; Hoare, T. "Injectable Anisotropic Nanocomposite Hydrogels Direct In Situ Growth and Alignment of Myotubes" Nano Letters 2017, 17, 6487–6495. [ summary – doi ] [ press: BNL ] |  |

| 99. Doerk, G.S.; Yager, K.G. "Beyond Native Block Copolymer Morphologies" Molecular Systems Design & Engineering 2017, 2, 518–538. [ summary – doi ] |  |

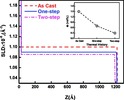

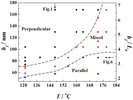

| 98. Ye, C.; Wang, C.; Wang, J.; Wiener, C.G.; Xia, X.; Cheng, S.Z.D.; Li, R.; Yager, K.G.; Fukuto, M.; Vogt, B.D. "Rapid Assessment of Crystal Orientation in Semi-Crystalline Polymer Films using Rotational Zone Annealing and Impact of Orientation on Mechanical Properties" Soft Matter 2017, 13, 7074–7084. [ summary – doi ] |  |

| 97. Lhermitte, J.R.; Stein, A.; Tian, C.; Zhang, Y.; Wiegart, L.; Fluerasu, A.; Gang, O.; Yager, K.G. "Coherent amplification of X-ray scattering from meso-structures" IUCrJ 2017, 4, 604–613. [ summary – doi ] |  |

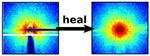

| 96. Liu, J.; Lhermitte, J.; Tian, Y.; Zhang, Z.; Yu, D.; Yager, K.G. "Healing X-ray scattering images" IUCrJ 2017, 4, 455–465. [ summary – doi ] [ press: BNL ] |  |

| 95. Manfrinato, V.R.; Stein, A.; Zhang, L.; Nam, C.-Y.; Yager, K.G.; Stach, E.A.; Black, C.T. "Aberration-Corrected Electron Beam Lithography at the One Nanometer Length Scale" Nano Letters 2017, 17, 4562–4567. [ summary – doi ] [ press: BNL - CFN - DoE - Newswise - Phys.org - IEEE Spectrum - Forbes - cover ] |  |

| 94. Black, C.T.; Forrey, C.; Yager, K.G. "Thickness-dependence of block copolymer coarsening kinetics" Soft Matter 2017, 13, 3275–3283. [ summary – doi ] [ press: cover ] |  |

| 93. Rokhlenko, Y.; Majewski, P.W.; Larson, S.R.; Gopalan, P.; Yager, K.G.; Osuji, C.O. "Implications of Grain Size Variation in Magnetic Field Alignment of Block Copolymer Blends" ACS Macro Letters 2017, 6, 404–409. [ summary – doi ] |  |

| 92. Wang, B.; Yager, K.G.; Yu, D.; Hoai, M. "X-ray Scattering Image Classification Using Deep Learning" Applications of Computer Vision (WACV) 2017, 1, 697–704. [ summary – doi ] [ press: iMedPub ] |  |

| 91. Lhermitte, J.R.; Tian, C.; Stein, A.; Rahman, A.; Zhang, Y.; Wiegart, L.; Fluerasu, A.; Gang, O.; Yager, K.G. "Robust X-ray angular correlations for the study of meso-structures" Journal of Applied Crystallography 2017, 50, 805–819. [ summary – doi ] |  |

| 90. Bhaway, S.M.; Qiang, Z.; Xia, Y.; Xia, X.; Lee, B.; Yager, K.G.; Zhang, L.; Kisslinger, K.; Chen, Y.-M.; Liu, K.; Zhu, Y.; Vogt, B.D. "Operando Grazing Incidence Small-Angle X-ray Scattering/X-ray Diffraction of Model Ordered Mesoporous Lithium-Ion Battery Anodes" ACS Nano 2017, 11, 1443–1454. [ summary – doi ] |  |

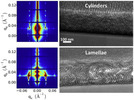

| 89. Rahman, A.; Majewski, P.W.; Doerk, G.S.; Black, C.T.; Yager, K.G. "Non-native Three-dimensional Block Copolymer Morphologies" Nature Communications 2016, 7, 13988. [ summary – doi ] [ press: BNL - CFN - DoE - Newswise - TBR ] |  |

| 88. Wang, B.; Guan, Z.; Yao, S.; Qin, H.; Nguyen, M.H.; Yager, K.G.; Yu, D. "Deep learning for analysing synchrotron data streams" New York Scientific Data Summit 2016, 16, 7747813. [ summary – doi ] [ press: iMedPub ] |  |

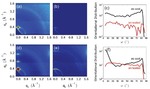

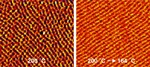

| 87. Samant, S.; Strzalka, J.; Yager, K.G.; Kisslinger, K.; Grolman, D.; Basutkar, M.; Salunke, N.; Singh, G.; Berry, B.; Karim, A. "Ordering Pathway of Block Copolymers under Dynamic Thermal Gradients Studied by in Situ GISAXS" Macromolecules 2016, 49, 8633–8642. [ summary – doi ] |  |

| 86. Bai, W.; Yager, K.G.; Ross, C.A. "In situ GISAXS study of a Si-containing block copolymer under solvent vapor annealing: Effects of molecular weight and solvent vapor composition" Polymer 2016, 101, 176–183. [ summary – doi ] |  |

| 85. Majewski, P.W.; Yager, K.G. "Rapid ordering of block copolymer thin films" Journal of Physics: Condensed Matter 2016, 28, 403002. [ summary – doi ] |  |

| 84. Goh, T.; Huang, J.-S.; Yager, K.G.; Sfeir, M.Y.; Nam, C.-Y.; Tong, X.; Guard, L.M.; Melvin, P.R.; Antonio, F.; Bartolome, B.G.; Lee, M.L.; Hazari, N.; Taylor, A.D. "Quaternary Organic Solar Cells Enhanced by Cocrystalline Squaraines with Power Conversion Efficiencies >10%" Advanced Energy Materials 2016, 6, 1600660. [ summary – doi ] |  |

| 83. Stein, A.; Wright, G.; Yager, K.G.; Doerk, G.S.; Black, C.T. "Selective directed self-assembly of coexisting morphologies using block copolymer blends" Nature Communications 2016, 7, 12366. [ summary – doi ] [ press: BNL - video - DoE - Newswise ] |  |

| 82. De France, K.J.; Yager, K.G.; Hoare, T.; Cranston, E.D. "Cooperative Ordering and Kinetics of Cellulose Nanocrystal Alignment in a Magnetic Field" Langmuir 2016, 32, 7564–7571. [ summary – doi ] [ press: CFN - cover ] |  |

| 81. Kim, B.; Chiu, C.-Y.; Kang, S.J.; Kim, K.S.; Lee, G.-H.; Chen, Z.; Ahn, S.; Yager, K.G.; Ciston, J.; Nuckolls, C.; Schiros, T. "Vertically grown nanowire crystals of dibenzotetrathienocoronene (DBTTC) on large-area graphene" RSC Advances 2016, 6, 59582–59589. [ summary – doi ] |  |

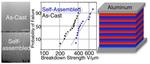

| 80. Samant, S.P.; Grabowski, C.A.; Kisslinger, K.; Yager, K.G.; Yuan, G.; Satija, S.K.; Durstock, M.F.; Raghavan, D.; Karim, A. "Directed Self-Assembly of Block Copolymers for High Breakdown Strength Polymer Film Capacitors" ACS Applied Materials & Interfaces 2016, 8, 7966–7976. [ summary – doi ] |  |

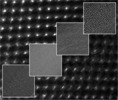

| 79. Liu, W.; Tagawa, M.; Xin, H.L.; Wang, T.; Emamy, H.; Li, H.; Yager, K.G.; Starr, F.W.; Tkachenko, A.V.; Gang, O. "Diamond family of nanoparticle superlattices" Science 2016, 351, 582–586. [ summary – doi ] [ press: BNL - CFN - DoE - Phys.org - Science ] |  |

| 78. Majewski, P.W.; Yager, K.G. "Reordering Transitions during Annealing of Block Copolymer Cylinder Phases" Soft Matter 2016, 12, 281–294. [ summary – doi ] |  |

| 77. Rokhlenko, Y.; Gopinadhan, M.; Osuji, C.O.; Zhang, K.; O’Hern, C.S.; Larson, S.R.; Gopalan, P.; Majewski, P.W.; Yager, K.G. "Magnetic Alignment of Block Copolymer Microdomains by Intrinsic Chain Anisotropy" Physical Review Letters 2015, 115, 258302. [ summary – doi ] [ press: APS ] |  |

| 76. Bai, W.; Yager, K.G.; Ross, C.A. "In Situ Characterization of the Self-Assembly of a Polystyrene–Polydimethylsiloxane Block Copolymer during Solvent Vapor Annealing" Macromolecules 2015, 48, 8574–8584. [ summary – doi ] |  |

| 75. Smith, K.A.; Lin, Y.-H.; Mok, J.W.; Yager, K.G.; Strzalka, J.; Nie, W.; Mohite, A.D.; Verduzco, R. "Molecular Origin of Photovoltaic Performance in Donor-block-Acceptor All-Conjugated Block Copolymers" Macromolecules 2015, 48, 8346–8353. [ summary – doi ] |  |

| 74. Bizien, T.; Ameline, J.-C.; Yager, K.G.; Marchi, V.; Artzner, F. "Self-Organization of Quantum Rods Induced by Lipid Membrane Corrugations" Langmuir 2015, 31, 12148–12154. [ summary – doi ] |  |

| 73. Mok, J.W.; Lin, Y.-H.; Yager, K.G.; Mohite, A.D.; Nie, W.; Darling, S.B.; Lee, Y.; Gomez, E.; Gosztola, D.; Schaller, R.D.; Verduzco, R. "Linking Group Influences Charge Separation and Recombination in All-Conjugated Block Copolymer Photovoltaics" Advanced Functional Materials 2015, 25, 5578–5585. [ summary – doi ] [ press: cover ] |  |

| 72. Page, K.A.; Shin, J.W.; Eastman, S.A.; Rowe, B.W.; Kim, S.; Kusoglu, A.; Yager, K.G.; Stafford, G.R. "In Situ Method for Measuring the Mechanical Properties of Nafion Thin Films during Hydration Cycles" ACS Applied Materials & Interfaces 2015, 7, 17874–17883. [ summary – doi ] |  |

| 71. Majewski, P.W.; Yager, K.G. "Latent Alignment in Pathway-Dependent Ordering of Block Copolymer Thin Films" Nano Letters 2015, 15, 5221–5228. [ summary – doi ] [ press: BNL ] |  |

| 70. Majewski, P.W.; Yager, K.G. "Block Copolymer Response to Photothermal Stress Fields" Macromolecules 2015, 48, 4591–4598. [ summary – doi ] |  |

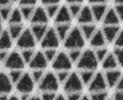

| 69. Majewski, P.W.; Rahman, A.; Black, C.T.; Yager, K.G. "Arbitrary lattice symmetries via block copolymer nanomeshes" Nature Communications 2015, 6, 7448. [ summary – doi ] [ press: BNL - BNL Lecture - video ] |  |

| 68. Yager, K.G.; Forrey, C.; Singh, G.; Satija, S.K.; Page, K.A.; Patton, D.L.; Douglas, J.F.; Jones, R.L.; Karim, A. "Thermally-Induced Transition of Lamellae Orientation in Block-Copolymer Films on ‘Neutral’ Nanoparticle-Coated Substrates" Soft Matter 2015, 11, 5154–5167. [ summary – doi ] |  |

| 67. Lu, F.; Yager, K.G.; Zhang, Y.; Xin, H.; Gang, O. "Superlattices assembled through shape-induced directional binding" Nature Communications 2015, 6, 6912. [ summary – doi ] [ press: BNL - DoE ] |  |

| 66. Majewski, P.W.; Yager, K.G. "Millisecond Ordering of Block-Copolymer Films via Photo-Thermal Gradients" ACS Nano 2015, 9, 3896–3906. [ summary – doi ] [ press: BNL ] |  |

| 65. Fischer, F.S.U.; Trefz, D.; Back, J.; Kayunki, N.; Tornow, B.; Albrecht, S.; Yager, K.G.; Singh, G.; Karim, A.; Neher, D.; Brinkmann, M.; Ludwigs, S. "Highly Crystalline Films of PCPDTBT with Branched Side Chains by Solvent Vapor Crystallization: Influence on Opto-Electronic Properties" Advanced Materials 2015, 27, 1223–1228. [ summary – doi ] |  |

| 64. Lu, X.; Hlaing, H.; Nam, C.-Y.; Yager, K.G.; Black, C.T.; Ocko, B.M. "Molecular Orientation and Performance of Nanoimprinted Polymer-based Blend Thin Film Solar Cells" Chemistry of Materials 2015, 27, 60–66. [ summary – doi ] |  |

| 63. Weidman, M.C.; Yager, K.G.; Tisdale, W.A. "Interparticle Spacing and Structural Ordering in Superlattice PbS Nanocrystal Solids Undergoing Ligand Exchange" Chemistry of Materials 2015, 27, 474–482. [ summary – doi ] [ press: cover ] |  |

| 62. Li, F.; Yager, K.G.; Dawson, N.M.; Jiang, Y.-B.; Malloy, K.J.; Qin, Y. "Nano-Structuring Polymer/Fullerene Composites through the Interplay of Conjugated Polymer Crystallization, Block Copolymer Self-Assembly and Complementary Hydrogen Bonding Interactions" Polymer Chemistry 2015, 6, 721–731. [ summary – doi ] |  |

| 61. Cativo, H.M.; Kim, D.K.; Riggleman, R.A.; Yager, K.G.; Nonnenmann, S.S.; Chao, H.; Bonnell, D.A.; Black, C.T.; Kagan, C.R.; Park, S.-J. "Air–Liquid Interfacial Self-Assembly of Conjugated Block Copolymers into Ordered Nanowire Arrays" ACS Nano 2014, 8, 12755–12762. [ summary – doi ] |  |

| 60. Bhaway, S.M.; Kisslinger, K.; Zhang, L.; Yager, K.G.; Schmitt, A.L.; Mahanthappa, M.K.; Karim, A.; Vogt, B.D. "Mesoporous Carbon–Vanadium Oxide Films by Resol-Assisted, Triblock Copolymer-Templated Cooperative Self-Assembly" ACS Applied Materials & Interfaces 2014, 6, 19288–19298. [ summary – doi ] |  |

| 59. Yager, K.G.; Lai, E.; Black, C.T. "Self-Assembled Phases of Block Copolymer Blend Thin Films" ACS Nano 2014, 8, 10582–10588. [ summary – doi ] |  |

| 58. Yager, K.G.; Majewski, P.W. "Metrics of graininess: robust quantification of grain count from the non-uniformity of scattering rings" Journal of Applied Crystallography 2014, 47, 1855–1865. [ summary – doi ] |  |

| 57. Kim, C.-H.; Hlaing, H.; Payne, M.M.; Yager, K.G.; Bonnassieux, Y.; Horowitz, G.; Anthony, J.E.; Kymissis, I. "Strongly Correlated Alignment of Fluorinated 5,11-Bis(triethylgermylethynyl)anthradithiophene Crystallites in Solution-Processed Field-Effect Transistors" ChemPhysChem 2014, 15, 2913–2916. [ summary – doi ] |  |

| 56. Li, F.; Yager, K.G.; Dawson, N.M.; Jiang, Y.-B.; Malloy, K.J.; Qin, Y. "Stable and Controllable Polymer/Fullerene Composite Nanofibers through Cooperative Noncovalent Interactions for Organic Photovoltaics" Chemistry of Materials 2014, 26, 3747–3756. [ summary – doi ] |  |

| 55. Smith, K.A.; Stewart, B.; Yager, K.G.; Strzalka, J.; Verduzco, R. "Control of all-conjugated block copolymer crystallization via thermal and solvent annealing" Journal of Polymer Science Part B: Polymer Physics 2014, 52, 900–906. [ summary – doi ] |  |

| 54. Srivastava, S.; Nykypanchuk, D.; Fukuto, M.; Halverson, J.D.; Tkachenko, A.V.; Yager, K.G.; Gang, O. "Two-Dimensional DNA-Programmable Assembly of Nanoparticles at Liquid Interfaces" Journal of the American Chemical Society 2014, 136, 8323–8332. [ summary – doi ] [ press: BNL ] |  |

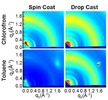

| 53. Lin, Y.-H.; Yager, K.G.; Stewart, B.; Verduzco, R. "Lamellar and liquid crystal ordering in solvent-annealed all-conjugated block copolymers" Soft Matter 2014, 10, 3817–3825. [ summary – doi ] |  |

| 52. Huang, H.; Yoo, S.; Kaznatcheev, K.; Yager, K.G.; Lu, F.; Yu, D.; Gang, O.; Fluerasu, A.; Qin, H. "Diffusion-based Clustering Analysis of Coherent X-ray Scattering Patterns of Self-assembled Nanoparticles" Proceedings of the ACM Symposium on Applied Computing 2014, 29, 85–90. [ summary – doi ] |  |

| 51. Kiapour, M.H.; Yager, K.G.; Berg, A.C.; Berg, T.L. "Materials discovery: Fine-grained classification of X-ray scattering images" Applications of Computer Vision (WACV) 2014, 1, 933–940. [ summary – doi ] [ press: iMedPub ] |  |

| 50. Zhang, X.; Yager, K.G.; Douglas, J.F.; Karim, A. "Suppression of target patterns in domain aligned cold-zone annealed block copolymer films with immobilized film-spanning nanoparticles" Soft Matter 2014, 10, 3656–3666. [ summary – doi ] |  |

| 49. Krejci, A.J.; Yager, K.G.; Ruggiero, C.; Dickerson, J.H. "X-ray scattering as a liquid and solid phase probe of ordering within sub-monolayers of iron oxide nanoparticles fabricated by electrophoretic deposition" Nanoscale 2014, 6, 4047–4051. [ summary – doi ] |  |

| 48. Lee, C.-H.; Schiros, T.; Santos, E.J.G.; Kim, B.; Yager, K.G.; Kang, S.J.; Lee, S.; Yu, J.; Watanabe, K.; Taniguchi, T.; Hone, J.; Kaxiras, E.; Nuckolls, C.; Kim, P. "Epitaxial Growth of Molecular Crystals on van der Waals Substrates for High-Performance Organic Electronics" Advanced Materials 2014, 26, 2812–2817. [ summary – doi ] |  |

| 47. Johnston, D.E.; Yager, K.G.; Hlaing, H.; Lu, X.; Ocko, B.M.; Black, C.T. "Nanostructured Surfaces Frustrate Polymer Semiconductor Molecular Orientation" ACS Nano 2014, 8, 243–249. [ summary – doi ] |  |

| 46. Yager, K.G.; Zhang, Y.; Lu, F.; Gang, O. "Periodic lattices of arbitrary nano-objects: modeling and applications for self-assembled systems" Journal of Applied Crystallography 2014, 47, 118–129. [ summary – doi ] |  |

| 45. Smith, K.A.; Pickel, D.L.; Yager, K.G.; Kisslinger, K.; Verduzco, R. "Conjugated block copolymers via functionalized initiators and click chemistry" Journal of Polymer Science Part A: Polymer Chemistry 2014, 52, 154–163. [ summary – doi ] |  |

| 44. Li, F.; Yager, K.G.; Dawson, N.M.; Yang, J.; Malloy, K.J.; Qin, Y. "Complementary Hydrogen Bonding and Block Copolymer Self-Assembly in Cooperation toward Stable Solar Cells with Tunable Morphologies" Macromolecules 2013, 46, 9021–9031. [ summary – doi ] |  |

| 43. Zhang, Y.; Lu, F.; Yager, K.G.; van der Lelie, D.; Gang, O. "A general strategy for the DNA-mediated self-assembly of functional nanoparticles into heterogeneous systems" Nature Nanotechnology 2013, 8, 865–872. [ summary – doi ] [ press: BNL - BNL Lecture - video ] |  |

| 42. Xue, J.; Singh, G.; Qiang, Z.; Yager, K.G.; Karim, A.; Vogt, B.D. "Facile control of long range orientation in mesoporous carbon films with thermal zone annealing velocity" Nanoscale 2013, 5, 12440–12447. [ summary – doi ] |  |

| 41. Kim, S.; Dura, J.A.; Page, K.A.; Rowe, B.W.; Yager, K.G.; Soles, C.L. "Surface-Induced Nanostructure and Water Transport of Thin Proton-Conducting Polymer Films" Macromolecules 2013, 46, 5630–5637. [ summary – doi ] |  |

| 40. Vial, S.; Nykypanchuk, D.; Yager, K.G.; Tkachenko, A.V.; Gang, O. "Linear Mesostructures in DNA–Nanorod Self-Assembly" ACS Nano 2013, 7, 5437–5445. [ summary – doi ] [ press: BNL ] |  |

| 39. Singh, G.; Batra, S.; Zhang, R.; Yuan, H.; Yager, K.G.; Cakmak, M.; Berry, B.; Karim, A. "Large-Scale Roll-to-Roll Fabrication of Vertically Oriented Block Copolymer Thin Films" ACS Nano 2013, 7, 5291–5299. [ summary – doi ] |  |

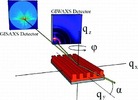

| 38. Lu, X.; Yager, K.G.; Johnston, D.; Black, C.T.; Ocko, B.M. "Grazing-incidence transmission X-ray scattering: surface scattering in the Born approximation" Journal of Applied Crystallography 2013, 46, 165–172. [ summary – doi ] [ press: BNL - cover ] |  |

| 37. Kelly, J.G.; He, W.; Somarajan, S.; Yager, K.G.; Dickerson, J.H. "Wide angle x-ray diffraction studies of nanocrystalline lead europium sulfide" Materials Letters 2012, 89, 198–201. [ summary – doi ] |  |

| 36. Singh, G.; Yager, K.G.; Berry, B.; Kim, H.-C.; Karim, A. "Dynamic Thermal Field-Induced Gradient Soft-Shear for Highly Oriented Block Copolymer Thin Films" ACS Nano 2012, 6 (11), 10335–10342. [ summary – doi ] |  |

| 35. Eastman, S.A.; Yager, K.G.; Kim, S.; Page, K.A.; Rowe, B.W.; Kang, S.; Soles, C.L. "Effect of Confinement on Structure, Water Solubility, and Water Transport in Nafion Thin Films" Macromolecules 2012, 45 (19), 7920–7930. [ summary – doi ] |  |

| 34. Singh, G.; Yager, K.G.; Smilgies, D.-M.; Kulkarni, M.M.; Bucknall, D.G.; Karim, A. "Tuning Molecular Relaxation for Vertical Orientation in Cylindrical Block Copolymer Films via Sharp Dynamic Zone Annealing" Macromolecules 2012, 17, 7107–7117. [ summary – doi ] |  |

| 33. Allen, J.E.; Ray, B.; Khan, M.R.; Yager, K.G.; Alam, M.A.; Black, C.T. "Self-assembly of single dielectric nanoparticle layers and integration in polymer-based solar cells" Applied Physics Letters 2012, 101, 063105. [ summary – doi ] |  |

| 32. Johnston, D.E.; Yager, K.G.; Nam, C.-Y.; Ocko, B.M.; Black, C.T. "One-Volt Operation of High-Current Vertical Channel Polymer Semiconductor Field-Effect Transistors" Nano Letters 2012, 8, 4181–4186. [ summary – doi ] [ press: BNL ] |  |

| 31. Kang, S.J.; Kim, J.B.; Chiu, C.-Y.; Ahn, S.; Schiros, T.; Lee, S.S.; Yager, K.G.; Toney, M.F.; Loo, Y.-L.; Nuckolls, C. "A Supramolecular Complex in Small-Molecule Solar Cells based on Contorted Aromatic Molecules" Angewandte Chemie International Edition 2012, 34, 8594–8597. [ summary – doi ] |  |

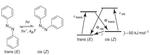

| 30. Mahimwalla, Z.; Yager, K.G.; Mamiya, J.-i.; Shishido, A.; Priimagi, A.; Barrett, C.J. "Azobenzene photomechanics: prospects and potential applications" Polymer Bulletin 2012, 69 (8), 967–1006. [ summary – doi ] |  |

| 29. Schedel, M.; Yager, K.G. "Hearing Nano-Structures: A Case Study in Timbral Sonification" International Conference on Auditory Display 2012, 18, 95–98. [ summary ] [ press: SBU - Sounding Out! - NPR - PNAS - BNL - PubSci ] |  |

| 28. Kulkarni, M.M.; Yager, K.G.; Sharma, A.; Karim, A. "Combinatorial Block Copolymer Ordering on Tunable Rough Substrates" Macromolecules 2012, 45, 4303–4314. [ summary – doi ] |  |

| 27. Allen, J.E.; Yager, K.G.; Nam, C.-Y.; Hlaing, H.; Ocko, B.M.; Black, C.T. "Implementing nanometer-scale confinement in organic semiconductor bulk heterojunction solar cells" Journal of Photonics for Energy 2012, 2, 021008. [ summary – doi ] |  |

| 26. Schiros, T.; Mannsfeld, S.; Chiu, C.-y.; Yager, K.G.; Ciston, J.; Gorodetsky, A.A.; Palma, M.; Bullard, Z.; Kramer, T.; Delongchamp, D.; Fischer, D.; Kymissis, I.; Toney, M.F.; Nuckolls, C. "Reticulated Organic Photovoltaics" Advanced Functional Materials 2012, 22, 1167–1173. [ summary – doi ] |  |

| 25. Schedel, M.; Fox-Gieg, N.; Yager, K.G. "A Modern Instantiation of Schillinger's Dance Notation: Choreographing with Mouse, iPad, KBow, and Kinect" Contemporary Music Review 2011, 30, 179–186. [ summary – doi ] |  |

| 24. Allen, J.E.; Yager, K.G.; Nam, C.-Y.; Ocko, B.M.; Black, C.T. "Enhanced charge collection in confined bulk heterojunction organic solar cells" Applied Physics Letters 2011, 99, 163301. [ summary – doi ] [ press: cover ] |  |

| 23. Hlaing, H.; Lu, X.; Hofmann, T.; Yager, K.G.; Black, C.T.; Ocko, B.M. "Nanoimprint-Induced Molecular Orientation in Semiconducting Polymer Nanostructures" ACS Nano 2011, 5, 7532–7538. [ summary – doi ] |  |

| 22. Wolcott, A.; Doyeux, V.; Nelson, C.A.; Gearba, R.; Lei, K.W.; Yager, K.G.; Dolocan, A.D.; Williams, K.; Nguyen, D.; Zhu, X.-Y. "Anomalously Large Polarization Effect Responsible for Excitonic Red Shifts in PbSe Quantum Dot Solids" Journal of Physical Chemistry Letters 2011, 2, 795–800. [ summary – doi ] |  |

| 21. Forrey, C.; Yager, K.G.; Broadaway, S.P. "Molecular Dynamics Study of the Role of the Free Surface on Block Copolymer Thin Film Morphology and Alignment" ACS Nano 2011, 5, 2895–2907. [ summary – doi ] |  |

| 20. Galley, W.C.; Tanchak, O.M.; Yager, K.G.; Wilczek-Vera, G. "Excited-State Processes in Slow Motion: An Experiment in the Undergraduate Laboratory" Journal of Chemical Education 2010, 87, 1252–1256. [ summary – doi ] |  |

| 19. Zhang, X.; Yager, K.G.; Fredin, N.J.; Ro, H.W.; Jones, R.L.; Karim, A.; Douglas, J.F. "Thermally Reversible Surface Morphology Transition in Thin Diblock Copolymer Films" ACS Nano 2010, 7, 3653–3660. [ summary – doi ] |  |

| 18. Song, L.; Feng, D.; Fredin, N.J.; Yager, K.G.; Jones, R.L.; Wu, Q.; Zhao, D.; Vogt, B.D. "Challenges in fabrication of mesoporous carbon films with ordered cylindrical pores via phenolic oligomer self-assembly with triblock copolymers" ACS Nano 2010, 1, 189–198. [ summary – doi ] |  |

| 17. Song, L.; Feng, D.; Campbell, C.G.; Gu, D.; Forster, A.M.; Yager, K.G.; Fredin, N.; Lee, H.-J.; Jones, R.L.; Zhao, D.; Vogt, B.D. "Robust conductive mesoporous carbon–silica composite films with highly ordered and oriented orthorhombic structures from triblock-copolymer template co-assembly" Journal of Materials Chemistry 2010, 20, 1691–1701. [ summary – doi ] |  |

| 16. Zhang, X.; Yager, K.G.; Kang, S.; Fredin, N.J.; Akgun, B.; Satija, S.; Douglas, J.F.; Karim, A.; Jones, R.L. "Solvent Retention in Thin Spin-Coated Polystyrene and Poly(methyl methacrylate) Homopolymer Films Studied By Neutron Reflectometry" Macromolecules 2010, 2, 1117–1123. [ summary – doi ] |  |

| 15. Yager, K.G.; Fredin, N.J.; Zhang, X.; Berry, B.C.; Karim, A.; Jones, R.L. "Evolution of block-copolymer order through a moving thermal zone" Soft Matter 2010, 1, 92–99. [ summary – doi ] |  |

| 14. Zhang, X.; De Paoli Lacerda, S.H.; Yager, K.G.; Berry, B.C.; Douglas, J.F.; Jones, R.L.; Karim, A. "Target Patterns Induced by Fixed Nanoparticles in Block Copolymer Films" ACS Nano 2009, 3, 2115–2120. [ summary – doi ] |  |

| 13. Yager, K.G.; Berry, B.C.; Page, K.; Patton, D.; Karim, A.; Amis, E.J. "Disordered Nanoparticle Interfaces for Directed Self-Assembly" Soft Matter 2009, 5, 622–628. [ summary – doi ] [ press: APS - cover ] |  |

| 12. Zhang, X.; Berry, B.C.; Yager, K.G.; Kim, S.; Jones, R.L.; Satija, S.; Pickel, D.L.; Douglas, J.F.; Karim, A. "Surface Morphology Diagram for Cylinder-Forming Block Copolymer Thin Films" ACS Nano 2008, 2, 2331–2341. [ summary – doi ] |  |

| 11. Tanchak, O.M.; Yager, K.G.; Fritzsche, H.; Harroun, T.; Katsaras, J.; Barrett, C.J. "Ion distribution in multilayers of weak polyelectrolytes: A neutron reflectometry study" Journal of Chemical Physics 2008, 129, 084901. [ summary – doi ] |  |

| 10. Barrett, C.J.; Mamiya, J.; Yager, K.G.; Ikeda, T. "Photo-mechanical Effects in Azobenzene-containing Soft Materials" Soft Matter 2007, 3, 1249–1261. [ summary – doi ] [ press: cover ] |  |

| 9. Yager, K.G.; Barrett, C.J. "Confinement of Surface Patterning in Azo-Polymer Thin Films" Journal of Chemical Physics 2007, 126, 094908. [ summary – doi ] |  |

| 8. Yager, K.G.; Barrett, C.J. "Photomechanical Surface Patterning in Azo-Polymer Materials" Macromolecules 2006, 39, 9320. [ summary – doi ] |  |

| 7. Yager, K.G.; Tanchak, O.M.; Godbout, C.; Fritzsche, H.; Barrett, C.J. "Photomechanical Effects in Azo-Polymers Studied by Neutron Reflectometry" Macromolecules 2006, 39, 9311. [ summary – doi ] |  |

| 6. Yager, K.G.; Barrett, C.J. "Novel Photoswitching using Azobenzene Functional Materials" Journal of Photochemistry and Photobiology A: Chemistry 2006, 182, 250. [ summary – doi ] |  |

| 5. Tanchak, O.M.; Yager, K.G.; Fritzsche, H.; Harroun, T.A.; Katsaras, J.; Barrett, C.J. "Water Distribution in Multilayers of Weak Polyelectrolytes" Langmuir 2006, 22, 5137. [ summary – doi ] |  |

| 4. Yager, K.G.; Tanchak, O.M.; Barrett, C.J.; Watson, M.J.; Fritzsche, H. "Temperature-controlled neutron reflectometry sample cell suitable for study of photoactive thin films" Review of Scientific Instruments 2006, 77, 045106. [ summary – doi ] |  |

| 3. Harroun, T.A.; Fritzsche, H.; Watson, M.J.; Yager, K.G.; Tanchak, O.M.; Barrett, C.J.; Katsaras, J. "Variable temperature, relative humidity (0%–100%), and liquid neutron reflectometry sample cell suitable for polymeric and biomimetic materials" Review of Scientific Instruments 2005, 76, 065101. [ summary – doi ] |  |

| 2. Yager, K.G.; Barrett, C.J. "Temperature Modelling of Laser-Irradiated Azo Polymer Films" Journal of Chemical Physics 2004, 120, 1089–1096. [ summary – doi ] |  |

| 1. Yager, K.G.; Barrett, C.J. "All-Optical Patterning of Azo Polymer Films" Current Opinion in Solid State and Materials Science 2001, 5, 487–494. [ summary – doi ] |  |